In recent years, artificial intelligence (AI) has worked its way into the core of the healthcare industry, promising to transform patient care and diagnostics. According to a report by Deloitte, 75% of large companies invested millions of dollars in AI, a sign of how quickly the sector’s technological landscape is changing.

However, the integration of AI in healthcare raises ethical dilemmas precisely because it can analyze vast amounts of data and predict outcomes with unprecedented accuracy. As AI becomes more deeply integrated into healthcare, we must confront critical questions about privacy, bias, and the balance between human judgment and machine precision.

This post explores the ethics of AI in healthcare and considers how this technology can be used responsibly for the benefit of patients and society.

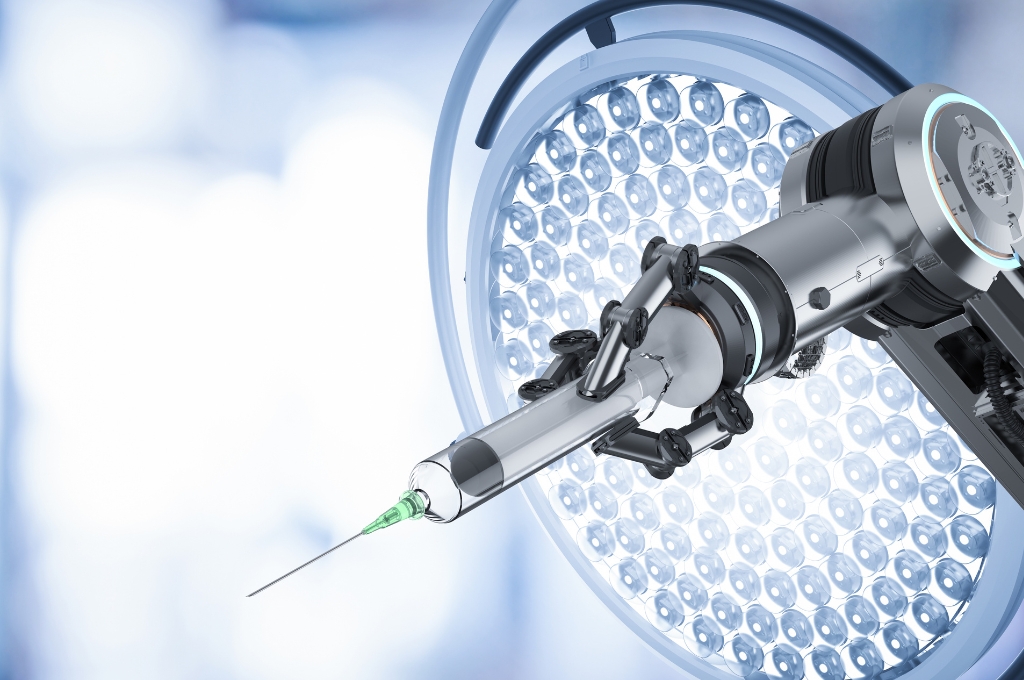

One of the most significant contributions of AI in healthcare lies in its ability to analyze vast amounts of patient data quickly and accurately. Machine learning algorithms can identify patterns and predict outcomes with a level of precision that was previously unimaginable, leading to improved diagnostics, personalized treatment plans, and enhanced patient care.

AI systems require access to sensitive patient data to function effectively, raising privacy and data security concerns. Patient information is highly confidential and must be safeguarded from unauthorized access or breaches.

To address these concerns, healthcare providers and AI developers must prioritize data protection by implementing robust encryption measures, adhering to compliance standards, and obtaining informed consent from patients to use their data for AI purposes.
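As a rough illustration of the consent and data-protection steps above, the sketch below drops records without recorded consent and replaces the raw patient identifier with a keyed hash before the data reaches a training pipeline. Note that this is pseudonymization with HMAC-SHA256, not encryption of the records themselves. The field names (`patient_id`, `consent_ai_use`) and the helper functions are hypothetical, chosen only for this example.

```python
import hashlib
import hmac


def pseudonymize(patient_id: str, secret_key: bytes) -> str:
    """Replace a patient identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_for_training(records, secret_key):
    """Keep only consented records and strip the raw identifier."""
    prepared = []
    for record in records:
        # Hypothetical consent flag: records without it are excluded from AI use.
        if not record.get("consent_ai_use", False):
            continue
        cleaned = {
            key: value
            for key, value in record.items()
            if key not in ("patient_id", "consent_ai_use")
        }
        cleaned["pseudonym"] = pseudonymize(record["patient_id"], secret_key)
        prepared.append(cleaned)
    return prepared


# Made-up example data, for illustration only.
records = [
    {"patient_id": "P-001", "consent_ai_use": True, "age": 54, "diagnosis": "J45"},
    {"patient_id": "P-002", "consent_ai_use": False, "age": 61, "diagnosis": "E11"},
]
key = b"demo-key-do-not-use-in-production"
prepared = prepare_for_training(records, key)
print(prepared)
```

Pseudonymized data can still be re-identified from the remaining fields, so a step like this would complement, not replace, encryption at rest and in transit and the applicable compliance requirements.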

AI algorithms are only as unbiased as the data they are trained on. If historical medical data used for training is biased, the AI system can perpetuate those biases, resulting in disparities in treatment and care.

For example, if a diagnostic algorithm is trained predominantly on data from one demographic group, it may perform less accurately for others. Developers must strive to eliminate bias by using diverse and representative datasets and implementing fairness-aware algorithms.
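One simple way to check for the kind of disparity described above is to compare a model’s accuracy across demographic groups. The sketch below is a minimal illustration, not a full fairness audit: the function names and the toy records are assumptions made for this example, and real evaluations would typically look at several metrics, not accuracy alone.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return {group: accuracy} for an iterable of (group, y_true, y_pred)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {group: correct[group] / total[group] for group in total}


def max_accuracy_gap(per_group):
    """Largest difference in accuracy between any two groups."""
    values = list(per_group.values())
    return max(values) - min(values)


# Made-up records: (demographic group, true label, predicted label).
records = [
    ("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 0),
    ("B", 1, 0), ("B", 0, 0), ("B", 1, 1), ("B", 0, 1),
]
per_group = accuracy_by_group(records)
gap = max_accuracy_gap(per_group)
print(per_group, gap)
```

A large gap between groups does not by itself explain the cause, but it is a useful signal that the training data or the model deserves a closer look before deployment.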

The advancement of AI may lead to a temptation to rely heavily on autonomous systems, potentially replacing human expertise altogether. However, ethical concerns arise regarding the loss of the human touch and empathetic care that only human healthcare providers can offer.

AI should be viewed as a supportive tool rather than a complete replacement. The focus should be on augmenting human capabilities, fostering collaboration between AI systems and healthcare professionals, and ensuring that the final decisions always rest with human experts.
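The principle that final decisions rest with human experts can be built into a workflow. In the hypothetical sketch below, the model’s confidence score only determines which review queue a suggestion lands in; nothing counts as final until a named clinician signs off. The `Suggestion` class, the queue names, and the 0.9 threshold are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Suggestion:
    patient_id: str
    finding: str
    confidence: float  # model's self-reported score in [0, 1]
    queue: str = "unassigned"
    decided_by: Optional[str] = None
    accepted: Optional[bool] = None


def route(suggestion: Suggestion, priority_threshold: float = 0.9) -> Suggestion:
    """Assign a review queue. The score sets priority; it never decides."""
    if not 0.0 <= suggestion.confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if suggestion.confidence >= priority_threshold:
        suggestion.queue = "priority_review"
    else:
        suggestion.queue = "routine_review"
    return suggestion


def sign_off(suggestion: Suggestion, clinician: str, accepted: bool) -> Suggestion:
    """Record the clinician's decision, which may overrule the model."""
    if not clinician:
        raise ValueError("a named clinician is required")
    suggestion.decided_by = clinician
    suggestion.accepted = accepted
    return suggestion


def is_final(suggestion: Suggestion) -> bool:
    """A suggestion is final only after a human has signed off."""
    return suggestion.decided_by is not None


s = route(Suggestion("P-001", "possible pneumonia", 0.95))
print(s.queue, is_final(s))
sign_off(s, "Dr. Example", accepted=False)
print(is_final(s), s.accepted)
```

Even a high-confidence suggestion can be rejected here, which keeps the AI system in a supporting role.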

The rapid advancements in AI technology necessitate comprehensive regulations to govern its use in healthcare. Regulatory bodies must collaborate with AI developers and healthcare professionals to establish guidelines and standards that promote ethical practices and protect patient interests.

Additionally, accountability measures should be implemented to ensure that AI systems are transparent and explainable, and that responsibility for their decisions can be traced to the people and organizations that build and deploy them.

As we journey into a new era of healthcare powered by AI, the ethical considerations surrounding its integration cannot be overlooked. By prioritizing privacy, fairness, human expertise, and accountability, we can unlock AI’s full potential while ensuring patients’ well-being and the integrity of medical practices.

To further advance the development of ethical AI in healthcare, we invite you to join VirtuousAI’s IaaS Platform. Our platform empowers healthcare professionals and AI developers to create and deploy AI models with a strong focus on ethics.

Contact us today to gain access to the tools and resources needed to develop AI systems that are explainable, equitable, and reliable.
